Game Playing: The Sliding Tiles Puzzle

The Game Setup

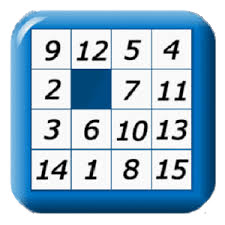

In this project we'll look at a one-person game that was popular in my childhood. It's a bit like the Rubic cube which came much later and which is more challenging. Here is a picture of one.

The game has a small platform with generally 16 slots in a 4 by 4 square. There are 15 tiles usually numbered from 1 to 15, one per slot. This leaves an empty slot. Any tile adjacent to the empty slot may slide into it, which then makes the tiles previous slot empty. The object is to slide tiles, one at a time, to bring them into order. The order is first by row and then by column, with the empty slot at the end.

Animation of puzzle being solved. Return with the browser's Back button) You can download this zip file for this project

To keep things simple we'll work with a 3x3 game with tiles numbered 1 to 8. Here is an example of a game in its final (solved) configuration.

1 2 3 4 5 6 7 8

The Representation of a Board configuration

As we'll see, during the search for a solution many thousands of partial solutions may be floating around in our workspace. It's important to keep these partial solutions as small as we can. A simple 9 character string does nicely. Here is an example to show how a 3x3 board might look.

42_713856Every configuration string is 9 (3^2) characters long. Each digit (1-8) is uniquely present and the underscore is used to indicate the empty slot. The string is simply ordered by row and then by column. The two goal solutions is 12345678_.

A Class for Helper Functions

We will build some small programs that both create and solve 3x3 configurations. To get better code reuse the common elements have been gathered into a class containing common function and attributes. Let's look at these now.

1 # tiles.py

2 #

3 import sys

4

5 class TileGame :

6 def __init__ (self, sampleBoard) :

7 self.legalMoves = legalMoves3

8 self.goal = "12345678_"

9 self.dim = 3

10 self.makeManhatten()

11The class name is TileGame and when an instance is created a sample board is passed to solve. A table is created with the Manhatten distances between all squares. The legal moves into each square from its neighbors is attached to the game instance. The dimension dim is always 3x3 for this version of the project but can be expanded to a 4x4 game.

Now let's create a game with a start board and then examine the attributes set up.

$ python

>>> import tiles

>>> startBoard = "4321_5678"

>>> game = tiles.TileGame(startBoard)

>>> game.goal

'12345678_'

>>> game.dim

3Let's look at the methods (functions) within TileGame.

12 def getMoves(self, board) :

13 empty = board.find('_')

14 return empty, self.legalMoves[empty]

>>> game.getMoves(startBoard)

(4, (1, 3, 5, 7))The method getMoves uses the legalMoves table to return a tuple with the slot that is empty and the slots from which a tile may be slid into it.

16 def makeMove(self, board, empty, mov) :

17 lbrd = list(board)

18 lbrd[empty],lbrd[mov] = lbrd[mov],lbrd[empty]

19 return "".join(lbrd)

20

>>> game.makeMove("4321_5678", 4, 7)

'4321756_8'The method makeMove creates a new board by moving a tile to the empty square. In line 17 the old board is made into a list that can be modified. Line 18 the contents are exchanged and in line 19 temporary list is converted back to a string.

21 def futureCost(self, board) :

22 # estimate future cost by sum of tile displacements

23 future = 0

24 for sq in range(self.dim*self.dim):

25 occupant = board[sq]

26 if occupant != '_' :

27 shouldbe = self.goal.find(occupant)

28 future += self.manTable[(sq,shouldbe)]

29 return future

30The method futureCost uses the "manhatten distance" which is distance between two points when you are confined in moving only along the x or y axis as in the streets of Manhatten. futureCost returns the sum of these distances for each tile to its proper locatino in the goal.

31 def makeManhatten(self) :

32 self.manTable = {}; Dim = self.dim

33 for aa in range(Dim*Dim) :

34 for bb in range(Dim*Dim) :

35 arow = aa//Dim; acol=aa%Dim

36 brow = bb//Dim; bcol=bb%Dim

37 self.manTable[(aa,bb)] = abs(arow-brow)+abs(acol-bcol)

38The manTable dictionary is set up when the game instance is initialized. Obviously, it makes a difference whether the game is 3x3 or 4x4. The keys in the dictionary are tuples of 2 two slot positions. The values the distance between them. Notice in line 37 the use of the abs function which to guarentee that "There are no Negative Distances".

Finally, a simple utility method to print a board to the screen. The tiles are laid out in rows with even spacing between columns (line 49).

39 def printBoard(self, board, mesg="", sep="\f") :

40 if sep : print(sep)

41 if mesg : print(mesg)

42 expand = ["%02s"%x for x in board]

43 rows = [0]*self.dim

44 for i in range(self.dim) :

45 rows[i] = "".join(expand[self.dim*i:self.dim*(i+1)])

46 print("\n".join(rows))

47

>>> game.printBoard("4321_5678", "At move zero")

At move zero

4 3 2

1 _ 5

6 7 8The slots are numbered to align with the Python zero-based array and string indexing.

0 1 2 3 4 5 6 7 8

Also in tiles.py are a couple of extras including tables of legal moves for both the 3x3 and 4x4 games and a function to trap errors and exit with error

48 def scream(error) :

49 sys.stderr.write("Error: %s\n" % error)

50 sys.stdout.write("Error: %s\n" % error)

51 sys.exit(1)

52

53 legalMoves3 = ( # for a 3x3 board

54 (1,3 ), # these can slide into square 0

55 (0,4,2), # these can slide into square 1

56 (1,5), # these can slide into square 2

57 (0,4,6), # these can slide into square 3

58 (1,3,5,7), # these can slide into square 4

59 (2,4,8), # these can slide into square 5

60 (3,7), # these can slide into square 6

61 (4,6,8), # these can slide into square 7

62 (5,7)) # these can slide into square 8

63

64 legalMoves4 = ( # for a 4x4 board

65 (1,4 ), # these can slide into square 0

66 (0,5,2), # these can slide into square 1

67 (1,6,7), # these can slide into square 2

68 (2,7), # these can slide into square 3

69 (0,5,8), # these can slide into square 4

70 (1,4,6,9), # these can slide into square 5

71 (2,5,7,10), # these can slide into square 6

72 (3,6,11), # these can slide into square 7

73 (4,9,12), # these can slide into square 8

74 (5,8,10,13), # these can slide into square 9

75 (6,9,11,14), # these can slide into square 10

76 (7,10,15), # these can slide into square 11

77 (8,13), # these can slide into square 12

78 (9,12,14), # these can slide into square 13

79 (10,13,15), # these can slide into square 14

80 (11,14)) # these can slide into square 15The Search for the solution

What makes this game interesting is that the search space, even for the 3x3 version of the puzzle contains enough possibilites that we need be quite clever in our approach. The number of possible node configurations in the 3x3 puzzle is 9 factorial or 362880. (why?). For a 4x4 puzzle the number would zoom to 20922789888000.

We'll look at doing the two brute force searches and then optimize them with 2 interesting algorithms that will greatly prune the results as the work proceeds. These are the Greedy and the A-star algorithms both of which use a heuristic to estimate the "goodness" of any particular configuration.

Intermediate results are stored in a priority queue in order of their "goodness". The "best" configurations move to the front of queue and get immediate follow-up for the next moves. Less promising wither away.

Generating Puzzles

When you bought one of these puzzles, it came with the tiles in order. You then could slide the tiles around to scramble them into a puzzle to be solved.

The little program tilesScramble.py does a random walk (drunkards walk) of the empty space for the number of steps input and outputs a new configuration.

1 # tilesScramble.py

2 #

3 # Scramble the solution by making N random moves.

4

5 def scramble(times) :

6 import tiles, random

7 board = "12345678_"

8 game = tiles.TileGame(board) # the goal board

9 used = {}

10 while times > 0 :

11 used[board] = True # mark as taken

12 empty, moves = game.getMoves(board)

13 move = random.choice(moves)

14 nextBoard = game.makeMove(board,empty,move)

15 if used.get(nextBoard) : continue

16 board = nextBoard

17 times -= 1

18 return board

19

20 def main() :

21 import sys, sarg

22 moves = sarg.Int("moves",15) # Number of times to randomly move

23 print(scramble(moves))

24

25 if __name__ == "__main__" : main()

26A TileGame object is created (line 8) and the initial board set to the goal. The while loop (line 10) steps the appropriate number times randomly choosing a legal move at each step and also avoiding looping (line 15).

Below are a couple of sample runs. The number of random moves to make is taken using the sarg module (line 22). The default is 15 moves

$ python tilesScramble.py moves=10

2731_5846

$ python tilesScramble.py

5_1463827You might well wonder why go this trouble when we could convert the goal to a 9 element list, use random.shuffle on it. Then bring it back to a string. The problem is that we're apt to get something that can't be solved. With the program we have something we know can be solved.

Representing Game Nodes

There is more information that must accompany the layout of the tiles. I want to take the approach of keeping this information in a tuple rather than creating an object class for the game nodes. One reason is for speed and size. Another is that by ordering the items in the tuple we will automatically get a very simple insertion into a priority queue.

Each tuple representing a game node will have the following 4 items in the order shown.

- The expected cost based on both past moves an and estimate of future moves

- The number of past moves so far

- The 9 character configuration of the board

- A pointer to the parent node of this node or None for the start node

The ordering of the information in the tuple is very important. If you sort a set of node tuples, the order you get is by "least expected overall cost".

Adding Heuristics to Solution Search

We'll take a give board configuartion and generate its "children" or successor boards. These will be reinserted into the priority queue.

With heuristics we can estimate how "good" a partial solution this, that is, how close it is likely to be to the final solution and insert it into the priority queue accordingly.

This is the heart of the A-star algorithm. Rather than depth-first or breadth-first, we use a priority queue to reinsert a partial result ahead of others whose "estimated overall cost" is greater. We always take from the front of the queue, that is, the partial solution with the least expected cost.

The "future expected cost" is of course the futureCost method in tiles.py above.

The Search Itself

The progam tilesSearch.py is shown below and will be explained in detail.

1 # tilesSearch.py

2 #

3 # Search for sequence of moves to bring a randomized tile puzzle

4 # into order

5

6 import sys, sarg, time

7 from tiles import TileGame

8

9 try:

10 import Queue as Q # python ver. < 3.0

11 except ImportError:

12 import queue as Q

13

14 def main() :

15 board = sys.argv[1]

16 ratio = sarg.Int("ratio",2)

17 timed = sarg.Int("time",0)

18 game = TileGame(board)

19 startTime = time.time()

20 path = search(game,board,ratio)

21 elapsed = time.time()-startTime

22 if timed : timeMsg = "Search took %s secs" % elapsed

23 else : timeMsg = ""

24 print("tilesSearch.py: Moves=%s %s" % (len(path),timeMsg))

25 for entry in path : print(entry)

26Function main gets a board and two optioal values from the command line. (line 15-17) A ratio used to balance the number of past moves to the overall futureCost calculated as a summation of manhatten distances to the goal state as explained above. The startTime is set from the system clock (line 19). The function search is then called with an initialized game instance followed by the parameters needed.

The search is also timed and output on request. (line 22). The path returned is printed.

The search function is similar to tilesScramble.py

27 def search(game, board, ratio) :

28 closed = {}

29 queue = Q.PriorityQueue()

30 origCost = game.futureCost(board)*ratio

31 orig = (origCost,0,board,None) # (cost,nMoves,board,parent)

32 queue.put(orig)

33 closed[orig] = True

34 expanded = 0

35 solution = NoneThe setup phase creates a closed dictionary to prevent looping, and a priority queue. The first node is queued, orig, is queued and we're ready to start.

36 while queue and not solution :

37 parent = queue.get()

38 expanded += 1

39 (parCost, parMoves, parBoard, ancester) = parent

40 empty, moves = game.getMoves(parBoard)

41 for mov in moves :

42 childBoard = game.makeMove(parBoard,empty,mov)

43 if closed.get(childBoard) : continue

44 closed[childBoard] = True

45 childMoves = parMoves+1

46 childCost = game.futureCost(childBoard)*ratio + childMoves

47 child = (childCost,childMoves,childBoard,parent)

48 queue.put(child)

49 if childBoard == game.goal : solution = childThe search loop (lines 36) gets the next node from the queue (line 37) and expands it (line 40). Legal moves from this position each have their cost evaluated (line 46) using both the futureCost function and the moves used so far. These are reinserted back into the queue. (line 48). We break on the first solution found. If no solution is found the queue runs dry. (Failure)

50

51 if solution :

52 print("%s entries expanded. Queue still has %s" % \

53 (expanded, queue.qsize()))

54 # find the path leading to this solution

55 path = []

56 while solution :

57 path.append(solution[0:3]) # drop the parent

58 solution = solution[3] # to grandparent

59 path.reverse()

60 return path

61 else :

62 return []

63If we get a solution (line 51), it is reconstructed from the linkages to parent nodes. But that is the solution in reverse. This is fixed in line 59 and the path returned. No solution results in an empty path being return.

Testing various Strategies

In this section we'll look at solving the puzzle by simple breadth-first search, and then with an intelligent heuristic. The breadth-first search does not consider future expection but only moves-so-far in the nodes produced. By setting the "future-ratio" to zero we get exactly that. The puzzle we'll use is solved in just 15 moves.

$ python tilesSearch.py 1234_5678 ratio=0 time=1

2391 entries expanded. Queue still has 1297

tilesSearch.py: Move= 15 Search took 0.0591 secs

(0, 0, '1234_5678')

(1, 1, '12345_678')

(2, 2, '12345867_')

(3, 3, '1234586_7')

(4, 4, '123458_67')

(5, 5, '123_58467')

(6, 6, '1235_8467')

(7, 7, '1235684_7')

(8, 8, '12356847_')

(9, 9, '12356_478')

(10, 10, '1235_6478')

(11, 11, '123_56478')

(12, 12, '123456_78')

(13, 13, '1234567_8')

(14, 14, '12345678_')The solution to the puzzle is just 15 moves but 2391 nodes were evaluated. The time, about 1/16 of a second is testimony to the speed of modern laptops.

Now let's run this for various values of "future-ratio". The results are a bit surprising. For brevity I have trimmed off the details of the 15 moves. There are always the same

$ python tilesSearch.py 1234_5678 time=1 ratio=0

2391 entries expanded. Queue still has 1297

tilesSearch.py: Moves=15 Search took 0.0508 secs

$ python tilesSearch.py 1234_5678 time=1 ratio=1

128 entries expanded. Queue still has 86

tilesSearch.py: Moves=15 Search took 0.0032 secs

$ python tilesSearch.py 1234_5678 time=1 ratio=2

90 entries expanded. Queue still has 64

tilesSearch.py: Moves=15 Search took 0.002 secs

$ python tilesSearch.py 1234_5678 time=1 ratio=3

110 entries expanded. Queue still has 77

tilesSearch.py: Move= 15 Search took 0.0029 secsThe optimum value for the ratio appears to be 2. But the lesson is that any consideration of the future cost vastly improves the performance both in time and CPU effort. Up to thirty to one. It's all about the ordering of partial solutions within groups with the same number of moves already taken.

The Greedy Algorithm

Finally, I'd like to look at one other algorithm that is somewhat simpler. This algorithm simply chooses the best child of its current node and never backtracks. It needs no queue and if it succeeds it is very very fast. tilesGreedy.py can get stuck and fail to solve a puzzle. But this is one it can. And we can compare it with the A-star solution.

01 # tilesGreedy.py

...

08 def search(game, board) :

09 path = []

10 closed = {} # boards already seen

11 closed[board] = True # don't return (avoid loops)

12 while True :

13 path.append(board)9

14 if board == game.goal : break

15 empty, moves = game.getMoves(board)

16 candidates = []

17 for mov in moves :

18 child = game.makeMove(board,empty,mov)

19 priority = game.futureCost(child)

20 if not closed.get(child) :

21 candidates.append( (priority,child) )

22 closed[child] = True

23 if candidates :

24 candidates.sort()

25 board = candidates[0][1] # choose lowest cost board for next

26 else :

27 print "Cannot move from ",board

28 break

29 return pathHere there is no priority queue. The program looks only at expected future cost of the children nodes from a partial solution (line 19). It takes the candidates and simply gets the best by sorting them and taking the first of the list. (lines 24-25). Let's test it.

$ python tilesGreedy.py 1234_5678 time=1

tilesGreedy.py: 1234_5678 moves=15 Search took 0.0003 secs

1234_5678

12345_678

12345867_

1234586_7

123458_67

123_58467

1235_8467

1235684_7

12356847_

12356_478

1235_6478

123_56478

123456_78

1234567_8

12345678_Ten times faster than A-star. And 300 times faster than breadth-first.

Here is a case where the both algorithms found a solution but the greedy solution is not optimum. It meanders through 59 moves instead of 23 for the A-star

$ python tilesGreedy.py 75126348_ time=1

tilesGreedy.py: 75126348_ moves=59 Search took 0.0014 secs

$ python tilesSearch.py 75126348_ time=1

414 entries expanded. Queue still has 260

tilesSearch.py: Moves=23 Search took 0.0105 secsYou can download this zip file for this project

Have Fun. If you have comments or suggestions You can email me at mail me

Copyright © 2014-2021 Chris Meyers and Fred Obermann

* * *